Have you ever thought about how Gmail separates your emails into different mailboxes? With some exceptions, it knows how best to distinguish important emails from social ones or promotions. There is a magic behind that process, and it is called intent analysis.

You may discover some emails misplaced in your spam folder or others incorrectly classified as promotional. The magic of intent analysis is not perfect; in fact, its accuracy depends on the choices made by whoever developed the service.

You can also see intent analysis at work in companies exploring the success of their product(s). Intent analysis can take text from reviews and turn it into useful data that a business can base decisions on. The same can be said for audio recordings and videos once their audio is transcribed into text, like when YouTube adds subtitles to a video. The logic behind the automation is the same. Both text and audio data are considered unstructured data, which needs to be structured in some way in order to be useful.

In this article, you’ll learn more about intent analysis, follow a short tutorial on how to create your first algorithm for gaining insight into a text, and learn about a few different tools that can help you gain meaningful insights from your data.

NLP and the New World

If you are a data scientist or someone curious about AI, you’ve probably heard about NLP (natural language processing). The previously mentioned magic behind intent analysis is generally created using NLP. However, NLP struggles to understand text and audio or video content without keywords. The unstructured content can be difficult to comb through, especially when it comes to human voices. Robotic voices tend to have a more consistent structure that helps NLP make predictions.

When you’re dealing with a more dynamic voice, you can use NLU (natural-language understanding). The main difference between NLU and NLP is that NLU creates an ontology out of the relationships between the words in a conversation. Thus, it creates a structure from the unstructured data to define the content instead of using the words alone.

NLU Implementation Using Python

Python is widely regarded as one of the most popular and easiest-to-use programming languages at the moment. Here, we’ll go through a simple guide on implementing NLU using Python and a Reddit sentiment data set.

So without further ado, let’s get started! You’ll want to open a Jupyter Notebook and use a Python 3.9 kernel with conda. You can take a quick read through the article “How to Set Up Anaconda and Jupyter Notebook the Right Way” if you don’t know how to do that.

Once you have opened a Jupyter Notebook, it’s time to install NLU and its dependencies:

# Write this as a first line

import sys

import os

! apt-get update -qq > /dev/null

# Install java

! apt-get install -y openjdk-8-jdk-headless -qq > /dev/null

os.environ["JAVA_HOME"] = "/usr/lib/jvm/java-8-openjdk-amd64"

os.environ["PATH"] = os.environ["JAVA_HOME"] + "/bin:" + os.environ["PATH"]

# get nlu

! conda install --yes --prefix {sys.prefix} -c johnsnowlabs nlu

# for prediction scoring

! conda install --yes --prefix {sys.prefix} scikit-learn

import nlu

import pandas as pd  # for data handling; the code below refers to it as pd

from sklearn.metrics import classification_report

Then download the Reddit data set:

! wget http://ckl-it.de/wp-content/uploads/2021/01/Reddit_Data.csv

Next, separate the features from the labels. The text column is your X (the features), and the y column holds the labels.

train_path = 'Reddit_Data.csv'  # wget saves the file to the current working directory (/content on Colab)

train_df = pd.read_csv(train_path)

# the text data to use as features should be in a column named 'text'

columns = ['text','y']

train_df = train_df[columns]

train_df
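Before training, it can help to set aside some rows for evaluation so the model is not scored on the same rows it learned from. The following is a minimal sketch using only the standard library; `split_indices` is a hypothetical helper written for this illustration, not part of NLU or pandas.

```python
import random


def split_indices(n_rows, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx): two disjoint, sorted lists of row positions."""
    if not 0 < test_fraction < 1:
        raise ValueError("test_fraction must be between 0 and 1")
    positions = list(range(n_rows))
    # A seeded shuffle keeps the split repeatable between runs
    random.Random(seed).shuffle(positions)
    n_test = max(1, round(n_rows * test_fraction))
    return sorted(positions[n_test:]), sorted(positions[:n_test])


train_idx, test_idx = split_indices(50, test_fraction=0.2)
print(len(train_idx), len(test_idx))  # 40 10
```

The returned position lists can be passed to `train_df.iloc[...]` to select the training rows and the held-out rows.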

Finally, train a deep learning classifier and check your predictions:

# load a trainable pipeline by specifying the train. prefix and fit it on a data set with label and text columns

# by default the Universal Sentence Encoder (USE) Sentence embeddings are used for generation

trainable_pipe = nlu.load('train.sentiment')

fitted_pipe = trainable_pipe.fit(train_df.iloc[:50])

# predict with the trainable pipeline on dataset and get predictions

preds = fitted_pipe.predict(train_df.iloc[:50],output_level='document')

# the sentence detector that is part of the pipe generates some NaNs, so drop them

preds.dropna(inplace=True)

# the 'y' column of train_df holds your labels

print(classification_report(preds['y'], preds['trained_sentiment']))

# check predictions

preds
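To make the classification report easier to read, here is a minimal sketch of how its per-label precision, recall, and F1 numbers can be computed by hand. The function name `per_label_scores` and the toy labels are assumptions made for illustration; they are not taken from the Reddit data set or from scikit-learn.

```python
def per_label_scores(y_true, y_pred):
    """Compute precision, recall, F1, and support for each label."""
    scores = {}
    for label in sorted(set(y_true) | set(y_pred)):
        # True positives: rows where both the real and predicted label match
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == label and p == label)
        predicted = sum(1 for p in y_pred if p == label)
        actual = sum(1 for t in y_true if t == label)
        precision = tp / predicted if predicted else 0.0
        recall = tp / actual if actual else 0.0
        total = precision + recall
        f1 = 2 * precision * recall / total if total else 0.0
        scores[label] = {
            "precision": precision,
            "recall": recall,
            "f1": f1,
            "support": actual,
        }
    return scores


# Toy labels, invented for this example
y_true = ["positive", "negative", "positive", "neutral", "negative"]
y_pred = ["positive", "positive", "positive", "neutral", "negative"]
for label, row in per_label_scores(y_true, y_pred).items():
    print(label, row)
```

Precision answers "of the rows predicted as this label, how many were right?", while recall answers "of the rows that really have this label, how many were found?". Keep in mind that the tutorial scores the model on the same 50 rows it was trained on, so the reported numbers are likely to be optimistic.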

Happy with your predictions? You have trained your first NLU algorithm. If you’re interested in learning more, consider taking a look at one of the tutorials listed in the “NLU Notebook Examples” to learn more about the meaning of your results and whether your predictions are accurate.

Modern Uses and Limitations of Intent Analysis

Intent analysis can be defined as the act of finding purpose in data. As you can imagine, there are a variety of ways that discovering this purpose could be useful for a business or application. In this section, let’s take a look at a few practical use cases for intent analysis as well as a few of its limitations.

Client Classification

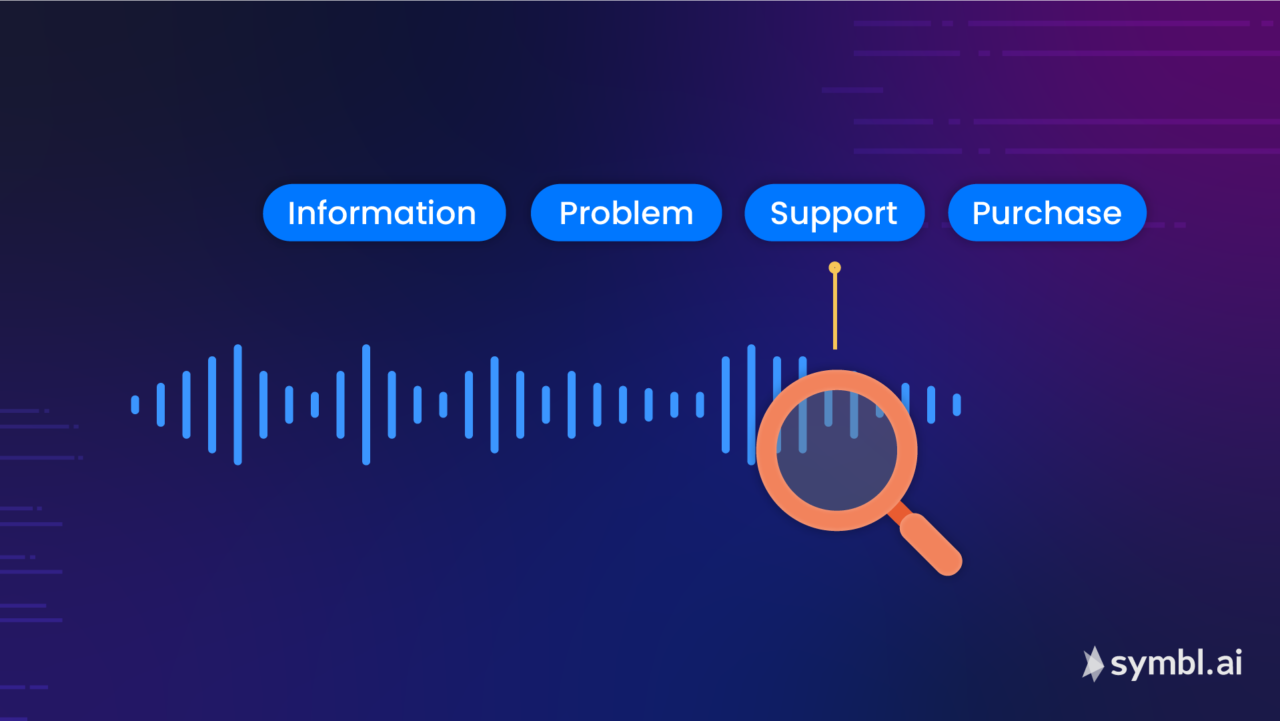

As its name implies, client classification is all about classifying clients based on their intention. This essentially requires associating their searches, audio recordings, video recordings, and/or any text with a category (or categories) that will depend on their aims.

For example, imagine a Netflix customer searching for a romantic movie. Based on their earlier searches, Netflix may have already made some suggestions using NLU. Netflix classifies each customer into categories according to the customer’s interests, which is ultimately what the Suggested for You list is based upon. Each category may be assigned a count of all the times the customer has accessed it or searched for a specific movie within it. As a result, the customer is provided with a recommended list of romantic movies.

The AI behind this isn’t a perfect science, but it does a good job learning from repeated searches, categories, or frequently watched movies to make a recommendation for a particular client based on their actions within the platform. It’s almost like predicting the future.
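The per-category counting described above can be sketched in a few lines. This is only an illustration of the idea, not how Netflix actually builds its recommendations; the function `top_categories` and the viewing history are made up for this example.

```python
from collections import Counter


def top_categories(history, n=2):
    """Return the n categories that appear most often in a viewing history."""
    return [category for category, _ in Counter(history).most_common(n)]


# Hypothetical history: one entry per search or view within a category
history = ["romance", "comedy", "romance", "thriller", "romance", "comedy"]
print(top_categories(history))  # ['romance', 'comedy']
```

A real system would weigh many more signals, but the core idea is the same: repeated actions within a category raise its count, and the highest counts drive the suggestions.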

Intent Marketing

As we briefly touched on at the beginning of this article, customer feedback on a product can help a business assess product success and features that may need to be added or changed. With intent marketing, companies gather information to better understand what customers enjoy or don’t like about their products. Occasionally, there is an overlap between intent marketing and client classification.

Spam Detection

Another very common use case for intent analysis is spam detection. It’s been done for quite some time using NLP, and while there are some limiting factors, the improvements that NLU brings should make it easier to detect unsolicited content.

In any case, you don’t want to miss important emails, and you don’t want to waste time on spammy ones. Thankfully, your Gmail box is already equipped with an intent analysis algorithm to help make sure the right emails are filed in the appropriate folders.
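To give a feel for how a classic NLP spam filter works, here is a minimal sketch of a naive Bayes text classifier with add-one smoothing. It is a toy illustration of one long-standing technique, not a description of how Gmail filters mail; the function names and the four training messages are invented for this example.

```python
import math
from collections import Counter


def train(messages):
    """Count words per label from a list of (text, label) pairs."""
    word_counts = {}
    label_counts = Counter()
    for text, label in messages:
        label_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(text.lower().split())
    return word_counts, label_counts


def classify(text, word_counts, label_counts):
    """Return the label with the highest naive Bayes log-probability."""
    vocabulary = set().union(*word_counts.values())
    total_messages = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, counts in word_counts.items():
        # Start from the prior: how common this label is overall
        score = math.log(label_counts[label] / total_messages)
        total_words = sum(counts.values())
        for word in text.lower().split():
            # Add-one smoothing so unseen words do not zero out the score
            score += math.log((counts[word] + 1) / (total_words + len(vocabulary)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Made-up training messages
training = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("meeting moved to monday", "ham"),
    ("lunch with the team monday", "ham"),
]
word_counts, label_counts = train(training)
print(classify("free prize money", word_counts, label_counts))     # spam
print(classify("team meeting monday", word_counts, label_counts))  # ham
```

Because this approach looks only at individual words, it shows the keyword dependence mentioned earlier: a message that avoids the usual spam vocabulary can slip through.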

Some Limitations of Intent Analysis

As we’ve stated, the results of intent analysis can be incorrect from time to time. Because it involves unstructured data, it is hard to infer a client’s intent, and the details related to that intent, perfectly. Furthermore, the client might have left out a punctuation mark such as a comma, and algorithms, to this day, haven’t been able to perfectly replicate human behavior in communication. However, there seems to be some light at the end of the tunnel, as some developers are trying to tweak NLU algorithms to grasp meaning more reliably.

Intent Analysis Pipelines to Consider

To help you start building your pipeline, let’s take a look at some of the best tools currently available that use NLU and/or NLP to gather insight.

Symbl.ai

Symbl.ai allows you to create custom trackers, which enable you to track vital data with minimal configuration. You can also check how your livestream is doing by using Symbl.ai’s Voice API or explore the options available with any of Symbl.ai’s other useful APIs.

Symbl.ai has a free option as well as an inexpensive pay-as-you-go option. Check out their pricing page for more information.

Conclusion

As you have seen, intent analysis helps you get the insight you need in an automated manner. By using AI, you can get a comprehensive overview of unstructured data content, even if it means that an email might get incorrectly categorized every once in a while.

Symbl.ai enables clients to use AI in understanding how to get value from and improve the effectiveness of interactive multimedia. Be sure to check it out to get the most out of your voice and video data. Sign up for a free account and get started today.