Voice applications offer the ultimate in accessibility. From Siri to Alexa, their services and queries are available to anyone; however, getting these apps to work reliably and consistently isn’t easy. Voice apps are unusually demanding, needing high bandwidth and CPU time. This makes them hard to deliver at scale.

Using a serverless platform helps with that, as it lets you concentrate on your code while leaving server management to the platform provider. It also helps you grow by scaling your services automatically.

In this comparison, you’ll learn about the three biggest players in the serverless industry: Google Cloud Functions, AWS Lambda, and Azure Functions. You’ll compare them based on pricing, features, developer experience, reliability, and scalability.

Let’s take a look at why these categories are so important for the efficiency of a serverless framework.

Pricing

Pricing is always an important factor when it comes to any business. Your costs per customer cut into your profits and need to be kept as low as possible.

Serverless services will charge you for each request, and they’ll also charge for compute time, so if your apps take more CPU resources, your costs increase. These two charges will form the bulk of your costs, aside from paying your developers.

Features

All cloud platforms allow you to run your code without worrying about server management, but they also offer additional features.

Each platform comes with its own set of services and integrations, so it’s important to review these before picking a provider. Once you’ve committed to a specific ecosystem, it isn’t easy to switch.

To help you choose, you’ll learn what unique feature sets each service offers and how they can help your app succeed.

Developer Experience

Choosing a platform that doesn’t get in the way of your developers will keep them happy in the long run. That requires thorough documentation, so your app can be integrated easily. Quickstart guides, API references, and comprehensive support are other important offerings.

You’ll also need to know how easy different services are to set up, configure, and deploy.

Reliability and Scalability

Google Cloud Functions, AWS Lambda, and Azure Functions are all major serverless services, but it’s still useful to have a guarantee telling you what minimum level of service to expect and what the repercussions are if that isn’t met.

An SLA, or Service Level Agreement, means you have a guaranteed level of service from your provider. This protects you if the service doesn’t meet the required standard, and you’re able to plan for worst-case scenarios in advance.

Scaling up brings difficult challenges. Your infrastructure needs to grow with you, and your service provider plays a big role in determining how your app will cope as your traffic increases.

Serverless Pricing Comparison

Google Cloud Functions provides two million free invocations per month and then charges forty cents per million after that.

Outbound data costs twelve cents per GB, with the first five GB free. Inbound data transfer is free, as is outbound data to Google APIs in the same region.

Compute time pricing depends on a range of factors, like your plan and the speed of the CPU you use, and is billed in increments of one hundred ms. The price per one hundred ms ranges from $0.000000231 to $0.000009520, and you get 400,000 GB-seconds free per month.

AWS Lambda gives you a million free requests per month and 400,000 GB-seconds of compute time. After that, it charges twenty cents per million requests.

Compute time pricing varies by region and function memory allocation. In the US East (Ohio) region, a 128 MB function using x86 architecture costs $0.00000021 per one hundred milliseconds, rising to $0.00001667 per one hundred milliseconds for a ten GB allocation.

That’s slightly cheaper than Google at the bottom end, but the top end offers more memory, which is why it’s more expensive.

Note: the AWS site lists these prices per millisecond rather than per one hundred milliseconds; the figures here have been converted for parity.

Azure Functions lets you calculate costs yourself using the pricing calculator on the Azure website. As with AWS Lambda, you get a million free requests and 400,000 GB-seconds of compute time per month.

Compute time costs aren’t radically different across services but do vary according to the region and the memory you use. To get an accurate picture of what your costs will be, you need to calculate the typical CPU time per request and then estimate how many requests per month a typical customer will make. You can then multiply these to see how the costs will change.
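The calculation described above can be sketched in a few lines of Python. This is a rough estimator rather than a billing tool: the `Pricing` class and `monthly_cost` function are hypothetical names, the rates are the AWS Lambda figures quoted earlier in this section, and the workload is invented for illustration. Real bills also depend on region, data transfer, and rounding rules, so check the provider’s pricing page before relying on the result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Illustrative pricing figures (assumed, taken from the text above)."""
    free_requests: int          # free invocations per month
    price_per_million: float    # USD per million requests beyond the free tier
    free_gb_seconds: float      # free compute per month, in GB-seconds
    price_per_gb_second: float  # USD per GB-second beyond the free tier


def monthly_cost(pricing, requests, avg_duration_s, memory_gb):
    """Estimate a monthly bill from request volume, average duration, and memory."""
    billable_requests = max(0, requests - pricing.free_requests)
    request_cost = billable_requests / 1_000_000 * pricing.price_per_million

    # Compute is metered as memory allocated multiplied by execution time.
    gb_seconds = requests * avg_duration_s * memory_gb
    billable_gb_seconds = max(0.0, gb_seconds - pricing.free_gb_seconds)
    compute_cost = billable_gb_seconds * pricing.price_per_gb_second

    return request_cost + compute_cost


# $0.00000021 per 100 ms at 128 MB (0.125 GB) works out to
# 0.00000021 * 10 / 0.125 = $0.0000168 per GB-second.
LAMBDA = Pricing(
    free_requests=1_000_000,
    price_per_million=0.20,
    free_gb_seconds=400_000,
    price_per_gb_second=0.0000168,
)

# Hypothetical workload: 5 million requests a month, 300 ms each, 512 MB of memory.
estimate = monthly_cost(LAMBDA, requests=5_000_000, avg_duration_s=0.3, memory_gb=0.5)
print(f"Estimated monthly cost: ${estimate:.2f}")
```

With these assumed numbers, request charges come to $0.80 and compute to $5.88, so compute time dominates the bill. That is typical of CPU-heavy workloads like voice processing.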

For example, let’s say you have an app that lets people search for train tickets by speaking the names of the stations they want to travel between. Every time someone speaks to the app, it makes a request. Each request counts towards your quota and, beyond the free tier, incurs a fixed per-request charge. On top of that, you’ll be charged for the compute time needed to process the request, which varies depending on how demanding the processing is.

If the function handling requests involves natural language processing, the CPU costs will be relatively high compared to other types of applications. If you use the app itself to handle speech recognition and look up the results with a service, CPU usage will be lower; however, that may slow your app down on the front end or use more battery for mobile users.

Figuring out how much time your requests take will help you determine the viability of your offering.

AWS and Azure have roughly identical pricing structures. Google gives you twice as many free requests but charges twice as much for each additional million. The extra million free requests is worth only about twenty cents at AWS rates, so once your service scales beyond the free tier, Google’s request charges grow faster.
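To see where the request charges cross over, here is a small sketch using only the per-request figures quoted in this section. The function names are hypothetical, and compute time is deliberately ignored.

```python
def request_charge(requests, free_requests, price_per_million):
    """Monthly request charge in USD, ignoring compute time."""
    billable = max(0, requests - free_requests)
    return billable / 1_000_000 * price_per_million


def google_requests(n):
    # Two million free invocations, then forty cents per million.
    return request_charge(n, free_requests=2_000_000, price_per_million=0.40)


def aws_requests(n):
    # One million free requests, then twenty cents per million.
    return request_charge(n, free_requests=1_000_000, price_per_million=0.20)


for millions in (1, 2, 3, 5, 10):
    n = millions * 1_000_000
    print(f"{millions:>2}M requests: "
          f"Google ${google_requests(n):.2f}, AWS ${aws_requests(n):.2f}")
```

On these figures the two charges are equal at three million requests a month. Below that, Google’s larger free tier is cheaper; above it, the higher per-million rate makes Google the more expensive option for requests.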

Serverless Features

All three of these services are part of broader cloud platforms with far more to discuss than can possibly be covered here. AWS and Azure have over 200 services, with Google having about half of that.

They also tend to lock you into using their own services, but there are things you can do to mitigate that, like keeping vendor-specific services in separate modules or making sure you don’t lean too heavily on features other providers don’t support.
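One way to keep vendor-specific services in their own modules is to have the rest of the app depend on a small interface instead of a provider SDK. The sketch below is illustrative only: `SpeechTranscriber`, `FakeTranscriber`, and `handle_voice_query` are hypothetical names, and the fake adapter stands in for whichever vendor’s speech service you would actually wrap.

```python
from typing import Protocol


class SpeechTranscriber(Protocol):
    """The only speech interface the rest of the app is allowed to depend on."""

    def transcribe(self, audio: bytes) -> str: ...


class FakeTranscriber:
    """Stand-in so the sketch runs; a real adapter would call a vendor SDK here."""

    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")


def handle_voice_query(audio: bytes, transcriber: SpeechTranscriber) -> dict:
    """Vendor-neutral business logic: turn speech into a ticket search."""
    text = transcriber.transcribe(audio)
    origin, _, destination = text.partition(" to ")
    return {"origin": origin.strip(), "destination": destination.strip()}


print(handle_voice_query(b"Leeds to York", FakeTranscriber()))
```

Switching providers then means writing one new adapter class and leaving `handle_voice_query` untouched.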

Google Cloud Functions uses open-source FaaS technology. It connects to a range of APIs, with the Cloud Vision and Video Intelligence APIs allowing you to process images and video and search through their contents. Its sentiment analysis helps you understand text content in greater depth.

As for languages, it supports C#, F#, Java, Go, Node.js, Python, Visual Basic, and Ruby.

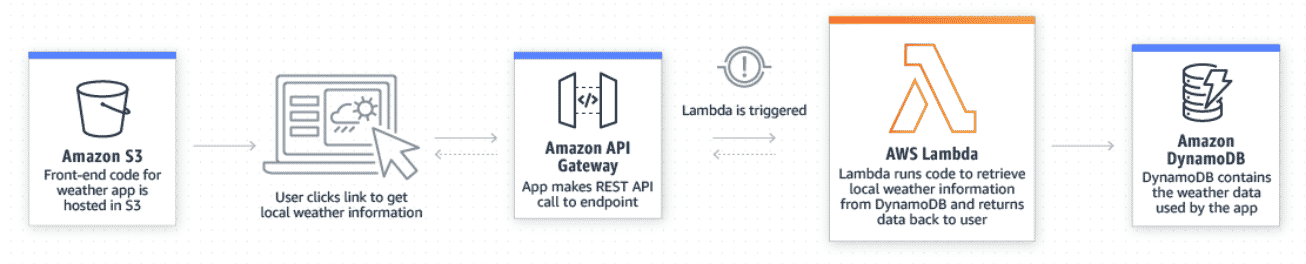

AWS Lambda lets you extend other AWS services with functions that run in response to events from other resources, such as a stream, a database, or an Amazon S3 bucket. It offers fast response times, with its “provisioned concurrency” feature responding to requests in under one hundred milliseconds.

It’s framework agnostic and has native support for Python, Java, C#, Ruby, Go, PowerShell, and Node.js, and its runtime API lets you work with other languages.

A typical setup might involve using Amazon API Gateway to map endpoints and Amazon Cognito for user management.
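As a rough illustration of that setup, here is what a Python function behind an API Gateway proxy integration might look like. It assumes the request body is JSON with hypothetical `origin` and `destination` fields, and that authentication has already been handled before the function is called. The ticket lookup itself is a placeholder.

```python
import json


def handler(event, context):
    """Entry point Lambda calls for each request forwarded by API Gateway."""
    # With proxy integration, the HTTP body arrives as a string (or None).
    try:
        payload = json.loads(event.get("body") or "{}")
    except json.JSONDecodeError:
        return _response(400, {"error": "Request body must be valid JSON"})

    if not isinstance(payload, dict):
        return _response(400, {"error": "Request body must be a JSON object"})

    origin = payload.get("origin")
    destination = payload.get("destination")
    if not origin or not destination:
        return _response(400, {"error": "Both 'origin' and 'destination' are required"})

    # Placeholder result; a real function would query a ticketing service here.
    return _response(200, {"origin": origin, "destination": destination, "tickets": []})


def _response(status_code, body):
    """Build the response shape that the proxy integration expects."""
    return {
        "statusCode": status_code,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(body),
    }
```

Because the handler is a plain function, you can call it locally with a dictionary such as `{"body": "{\"origin\": \"Leeds\", \"destination\": \"York\"}"}` to test it before deploying.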

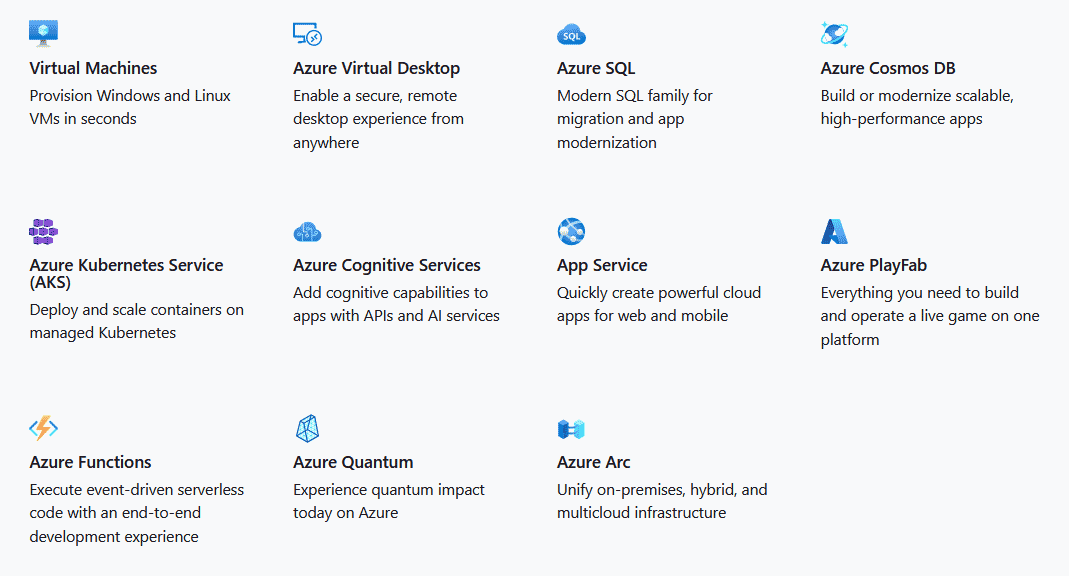

Azure Functions is notable for its flexibility, allowing you to deploy through tools like GitHub, Visual Studio Team Services, and others.

It also has plenty of integrations, including Azure’s various services and GitHub Webhooks.

Azure Functions supports C#, F#, Java, Node.js, PowerShell, Python, and TypeScript.

If you’re working in Java, Node.js, Python, or C#, you can pick any platform, but for other languages, you need to check if support is available.

AWS and Azure have custom systems for using other runtimes. Google allows you to use Docker images.

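AWS Lambda works well for asynchronous functions, which are useful for voice operations that take a lot of compute time. Asynchronous invocations are placed in a queue before they’re processed, and failed invocations are retried, which helps prevent requests from being lost.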

All the providers offer so much that unless you are tied to a specific language, you need to evaluate them carefully to see if there is anything that fits your specific use case.

Developer Experience

Google Cloud Functions aims to simplify the developer experience, allowing you to focus on code while it handles the infrastructure and scaling for you. It takes fewer steps to deploy than the other services.

Functions take a few minutes to deploy the first time, but are much faster to redeploy. You can deploy from your local machine, GitHub or Bitbucket. Functions are tested automatically, and traffic is directed to the latest version of the function.

Google Cloud Functions also has extensive documentation and tutorials, along with useful, language-specific quickstarts. Some of its training links lead to sandboxes, although these can take a long time to load.

Despite some flaws in its documentation, Google has a reputation for usability, with an easy-to-use UI. For less experienced teams, it’s a strong choice.

Azure has multi-language support, including open-source languages, and offers help with debugging. It’s also strong on machine learning and automation, which can save you time if you take advantage of them.

Azure lets you run updates across multiple devices at the touch of a button, which is another potential timesaver.

Azure Functions lets you use source code integration for continuous deployment.

You can build and deploy using Azure Pipelines or GitHub Actions, as well as the Jenkins Plugin. Its deployment slots allow you to swap new functions in for existing ones with little to no downtime.

Its documentation has detailed quickstart guides and many tutorials, along with more conceptual documents and videos if you prefer to learn that way.

AWS Lambda works well with other AWS services and also makes management very simple. Deployments are handled using packages that can be either container images or zip files.

You can use CodeDeploy to swap traffic incrementally to updated versions of your functions.

Its documentation includes API references, developer guides for different features, and an operator guide giving a high-level overview.

AWS is long-established, and there are many tools that work with it. For example, sls-dev-tools can help you with metrics and debugging.

It has a range of in-depth services and a huge selection of features and configuration options, as well as security and reliability features; however, all those choices can be overwhelming.

Reliability and Scalability

Google Cloud Functions promises to let you scale from “zero” to “planet scale” without doing anything because everything is handled in the background.

Google also has an SLA guaranteeing 99.95 percent uptime every month. There’s a ten percent rebate (against future bills) if uptime falls short of that, rising to fifty percent if it drops below ninety-five percent.

AWS Lambda also handles maintenance and infrastructure management like Google and includes built-in fault tolerance, which allows you to cope with outages at individual data centers. There is no downtime for maintenance, and it scales automatically, depending on incoming requests.

Its SLA is similar to Google’s but gives you a full rebate if uptime drops below ninety-five percent.

Azure Functions has a range of plans depending on how you want your scaling to work.

Azure Functions’ SLA matches AWS Lambda’s, offering you a full rebate if uptime drops below ninety-five percent.
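To put those percentages in concrete terms, the sketch below converts an uptime target into minutes of permitted downtime and applies the rebate tiers as described in this section. It is a simplification: the function names are hypothetical, a thirty-day month is assumed, and the providers’ actual SLAs may define intermediate tiers that aren’t modeled here.

```python
def allowed_downtime_minutes(uptime_percent, days_in_month=30):
    """Minutes of downtime per month that still meet the uptime target."""
    total_minutes = days_in_month * 24 * 60
    return total_minutes * (100 - uptime_percent) / 100


def rebate_percent(actual_uptime_percent, lowest_tier_rebate):
    """Rebate tiers as summarized above.

    lowest_tier_rebate is the rebate below 95 percent uptime:
    50 for Google, 100 for AWS Lambda and Azure Functions.
    """
    if actual_uptime_percent < 95.0:
        return lowest_tier_rebate
    if actual_uptime_percent < 99.95:
        return 10
    return 0


print(f"99.95% uptime allows {allowed_downtime_minutes(99.95):.1f} minutes of downtime a month")
print(f"Rebate at 99.9% uptime: {rebate_percent(99.9, lowest_tier_rebate=50)}%")
print(f"Rebate at 94% uptime on AWS or Azure: {rebate_percent(94.0, lowest_tier_rebate=100)}%")
```

A 99.95 percent target leaves a little over twenty minutes of downtime in a thirty-day month, which is a useful figure to have when you plan for worst-case scenarios.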

When it comes to starting new instances of services, AWS has a speed advantage over other providers. Its “cold starts” take less than a second, with Google averaging around a second and Azure sometimes taking over five seconds. If you have a service that needs to spin up multiple new instances quickly and respond efficiently, AWS is the best option.

AWS Lambda has a default concurrency limit of 1,000 per region, which you can ask AWS to raise, with the others having no fixed limit.
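A quick way to check whether the 1,000-per-region figure matters for your app is to estimate concurrency as requests per second multiplied by average request duration. The sketch below does that for a few invented traffic levels. The function name and the workloads are hypothetical.

```python
import math

REGIONAL_LIMIT = 1000  # concurrency figure quoted above


def required_concurrency(requests_per_second, avg_duration_ms):
    """Approximate number of function instances busy at the same time."""
    return math.ceil(requests_per_second * avg_duration_ms / 1000)


# Hypothetical traffic levels, each with 300 ms average request duration.
for rps in (200, 2_000, 5_000):
    needed = required_concurrency(rps, avg_duration_ms=300)
    status = "within" if needed <= REGIONAL_LIMIT else "over"
    print(f"{rps:>5} requests/s needs about {needed} concurrent instances ({status} the limit)")
```

Shorter requests lower the concurrency you need at a given traffic level, which is another reason to measure how long your voice processing takes.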

Conclusion

Choosing a cloud provider is a major decision that has profound long-term implications for your apps.

The services covered here offer support for different languages, have a huge variety of additional features, and vary in pricing and reliability. Picking the right provider is complicated.

If you’re just setting out, take a look at your business plan and figure out what your app will need in the future. How will the requirements change as it grows? What does success look like? Answering these questions will help you determine which service is the best fit.

Whichever you choose, these services make rapid scaling and growth significantly more achievable.

Similarly, AI voice apps are easier to develop if you take advantage of existing services. Symbl.ai can help your team deliver better customer experiences. Its conversation intelligence lets you utilize voice and video without the need to build machine learning models yourself.